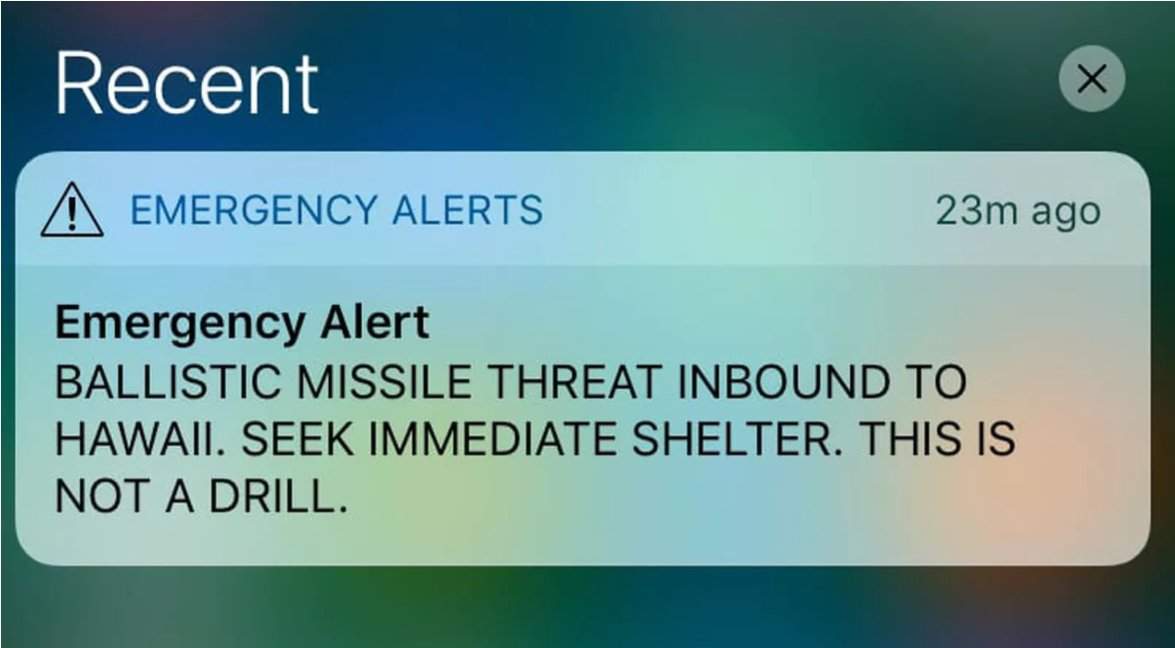

The recent ballistic missile false alarm in Hawaii made headlines around the world. It was quickly revealed that the alarm resulted from an employee selecting the wrong option from a menu. There were two very similarly worded options, one for a “test” alert and one for an actual alert. He selected, and then confirmed, the wrong one. Anyone who has used a computer for even a short period of time is aware of the potential for this kind of error. It is rather disconcerting that a safety-critical system such as this one doesn’t appear to have had any Human Factors/Ergonomics input or usability testing.

In the aftermath of the mass panic, death threats were made against the agency involved. I was waiting for the seemingly inevitable “name, blame, shame and retrain (or worse still, sack)” response to the poor individual at the pointy end of the incident, so it was quite refreshing to instead see this response from Vern Miyagi of the Hawaii Emergency Management Agency:

“Looking at the nature and cause of the error that led to those events, the deeper problem is not that someone made a mistake; it is that we made it too easy for a simple mistake to have very serious consequences…The system should have been more robust, and I will not let an individual pay for a systemic problem.”

I grew up during the time of the Karate Kid movies, so it seems fitting to see such wisdom coming from a “Mr Miyagi”. I wish more people, especially the media and politicians, would also display a better understanding of complex systems, Human Factors/Ergonomics and the importance of good design and usability testing for such safety-critical systems. This YouTube clip became popular following the false alarm, and the Oprah reference takes on new meaning given the recent Oprah 2020 push!